ChatGPT

The personal computer. Google search. The smartphone. Lifelike chat bots. Another generation-defining technology has arrived.

We've officially crossed the Rubicon.

We're rapidly approaching the event horizon.

And The Singularity is within sight.

I'm writing, of course, about the latest release of ChatGPT (GPT-3.5), the natural language processing tool from OpenAI.

What is ChatGPT?

ChatGPT is a chat bot.

But not your ISP's crappy chat bot that tries to get you to bundle your internet, phone, and TV when all you want to know is why your internet connection is too slow to stream the latest episode of Resident Alien. No, ChatGPT isn't spitting out the same few dozen canned responses based on a handful of pre-programmed keywords.

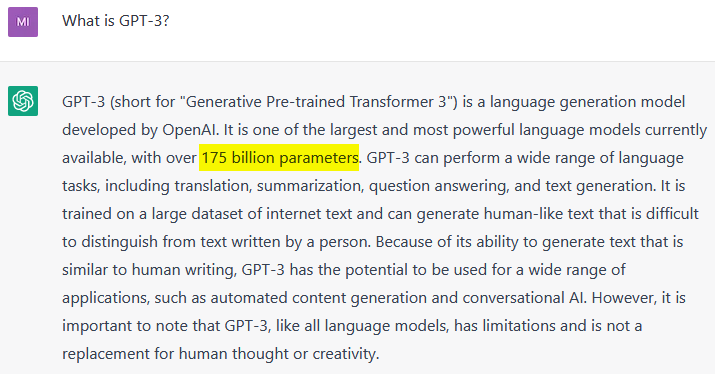

It's had a bit more training than that. How much more? Let's ask it!

(Note that I had to ask ChatGPT about its previous iteration, GPT-3, because the current version did not exist when it was being trained. In other words, ChatGPT is not self-aware...yet.)

[MIKE]: What is GPT-3?

[ChatGPT]: GPT-3 (short for "Generative Pre-trained Transformer 3") is a language generation model developed by OpenAI. It is one of the largest and most powerful language models currently available, with over 175 billion parameters. GPT-3 can perform a wide range of language tasks, including translation, summarization, question answering, and text generation. It is trained on a large dataset of internet text and can generate human-like text that is difficult to distinguish from text written by a person. Because of its ability to generate text that is similar to human writing, GPT-3 has the potential to be used for a wide range of applications, such as automated content generation and conversational AI. However, it is important to note that GPT-3, like all language models, has limitations and is not a replacement for human thought or creativity.

The Lady Doth Protest Too Much, Methinks

My favorite part of the above ChatGPT response is this:

However, it is important to note that GPT-3, like all language models, has limitations and is not a replacement for human thought or creativity.

That sounds exactly like what a super-intelligent AI would say...moments before running amok and taking over the world.

In all seriousness, though, it is important to remember that ChatGPT is not capable of original thought. Rather, it is "simply" rearranging words into a meaningful response based on the user's text and the statistical patterns it learned from an enormous body of training text.
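To make "rearranging words based on training text" a little more concrete, here's a deliberately tiny next-word predictor in Python. To be clear, this is a simple bigram counter, not a transformer, and it is nothing like GPT in scale or architecture; the function names and the toy corpus are made up for illustration. It just predicts each next word from whatever most often followed the previous word in its training text.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: str) -> dict[str, Counter]:
    """Count, for every word, which words followed it in the training text.

    Toy illustration only: real language models do not work this simply.
    """
    words = corpus.lower().split()
    followers: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def generate(followers: dict[str, Counter], start: str, length: int) -> str:
    """Greedily append the most frequent follower of the last word.

    Ties go to whichever follower was seen first in the training text.
    """
    output = [start]
    for _ in range(length):
        options = followers.get(output[-1])
        if not options:
            break  # the model never saw this word followed by anything
        output.append(options.most_common(1)[0][0])
    return " ".join(output)


# Hypothetical training text, purely for illustration.
corpus = "access is a database . vba is a programming language . access is a tool ."
model = train_bigrams(corpus)
print(generate(model, "vba", 4))  # vba is a database .
```

Starting from `vba`, it confidently produces `vba is a database .` Every word pair in that sentence appeared in the training text, yet the sentence is wrong: a miniature version of fluent, authoritative-sounding output that happens to be false.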

Wisdom of the Crowd, But on Lyle Alzado-Level Steroids

Ultimately, ChatGPT is nothing more than the next (enormously large) iteration in harnessing the wisdom of the crowd.

Sometimes, the wisdom of the crowd produces spectacularly positive results:

- Wikipedia

- Google search

- Political betting markets

Other times, not so much:

- The Salem witch trials

- The Tulsa race massacre

- The Holocaust

My point is that just because a lot of people believe something, that does not necessarily make it good or true. Likewise, you should not blindly trust ChatGPT's output.

Examples of Good Information

Sometimes it can be pretty good. Consider the following definitions of Access and VBA.

What is Microsoft Access?

[MIKE]: What is Microsoft Access?

[ChatGPT]: Microsoft Access is a database management system (DBMS) that is part of the Microsoft Office suite of productivity software. It is used to store, organize, and manage data, and is particularly well-suited for managing large amounts of structured data (data that is organized in a specific way and follows a predetermined set of rules).

Access allows users to create and manipulate data in the form of tables, queries, forms, and reports. It also includes tools for importing and exporting data to and from other databases and for creating relationships between different data elements.

Access is often used by small to medium-sized businesses, as well as by individuals and organizations that need to manage large amounts of data and generate reports from that data. It is a powerful and flexible tool that can be used for a wide range of data management tasks, and is particularly useful for creating custom databases and applications.

Honestly, I was expecting a lot more anti-Access sentiment. I was pleasantly surprised.

What is VBA?

[MIKE]: What is VBA?

[ChatGPT]: VBA stands for Visual Basic for Applications. It is a programming language that is included with Microsoft Office applications such as Microsoft Excel, Microsoft Access, and Microsoft Word.

VBA is used to create custom programs and macros that can automate tasks and perform complex calculations within these applications. It is a powerful and flexible tool that allows users to create custom solutions to meet their specific needs.

To use VBA, you need to be familiar with the Visual Basic programming language and the specific object model of the Office application you are working with. VBA code can be written and edited in the VBA editor, which is built into Office applications. The VBA editor includes a debugger that can be used to test and troubleshoot code.

VBA is a useful tool for those who need to automate tasks or perform complex calculations within Office applications, or who want to create custom solutions using these applications. It is an important part of the Microsoft Office suite of productivity tools and is widely used by businesses and individuals around the world.

I'd say that's also pretty even-handed and accurate.

Examples of Bad Information

You know the saying, "Garbage in, garbage out"?

Well, with machine learning, a more accurate phrase might be, "Small sample sizes in, garbage out."
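Here's a quick way to see why small samples are a problem. This is a generic statistics sketch, not a model of how ChatGPT is actually trained; the `estimate_spread` helper and the 30% "true rate" are hypothetical. It estimates the same underlying rate many times, once with tiny samples and once with large ones, and measures how much the estimates bounce around.

```python
import random
import statistics


def estimate_spread(true_rate: float, sample_size: int, trials: int, seed: int = 42) -> float:
    """Return the standard deviation of sample-based estimates of true_rate.

    Each trial draws `sample_size` yes/no observations and estimates the
    rate from them; the spread across trials shows how unstable that
    estimate is.
    """
    rng = random.Random(seed)  # seeded so the run is repeatable
    estimates = []
    for _ in range(trials):
        hits = sum(rng.random() < true_rate for _ in range(sample_size))
        estimates.append(hits / sample_size)
    return statistics.pstdev(estimates)


small = estimate_spread(true_rate=0.3, sample_size=5, trials=500)
large = estimate_spread(true_rate=0.3, sample_size=2_000, trials=500)
print(f"n=5:    spread of estimates ~ {small:.3f}")
print(f"n=2000: spread of estimates ~ {large:.3f}")
```

With only five observations per estimate, the answers swing wildly from run to run; with thousands, they cluster tightly around the true 30%.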

Even with a training set as vast as ChatGPT's, where would you expect to find a small (but nonzero) sample size? How about among relatively obscure emerging technologies, like, say, twinBASIC?

What is twinBASIC?

I asked ChatGPT this question multiple times (there's a handy [Regenerate Response] button). Nearly every time, it credited a different person or company with the project, but none of its answers correctly identified the actual developer, Wayne Phillips.

Here are excerpts from some of ChatGPT's responses:

- "It was developed by the company CMD microcomputer GmbH..."

- "It is a relatively new programming language that was developed by Andreas Filsinger and released in 2019." (who even is that?)

- "TwinBASIC is a programming language and integrated development environment (IDE) developed by Jimval Technologies."

- "I'm sorry, but I'm not familiar with a programming language called 'twinBASIC.'"

- "It was developed by Twin Peaks Software, a company based in the United Kingdom."

- "twinBASIC is an open-source project, which means that the source code is freely available and can be modified and distributed by anyone." (I bet that's news to Wayne)

The closest ChatGPT ever got to the truth was when it said twinBASIC was developed by "a company based in the United Kingdom," though I suspect that was just luck.

Avoiding Harmful Information

After earlier natural language processing efforts reflected the sometimes ugly side of unvarnished humanity (in the form of racist and sexist tweets from Tay, Microsoft's short-lived AI Twitter bot), OpenAI has learned from others' mistakes:

"...releasing our models through an API also allows us to monitor for and respond to misuse of the technology. We are carefully evaluating how these models are being used and behave in the real world and are working to build the best possible safeguards to identify and reduce problems."

Another example of OpenAI's commitment to avoid harmful output was included at the bottom of its announcement article, under the "Limitations" heading:

While we’ve made efforts to make the model refuse inappropriate requests, it will sometimes respond to harmful instructions or exhibit biased behavior. We’re using the Moderation API to warn or block certain types of unsafe content, but we expect it to have some false negatives and positives for now. We’re eager to collect user feedback to aid our ongoing work to improve this system.

Aside from these overtly harmful responses, there's a more subtle, and more profound, danger: the danger of uninformed usage.

The Dangers of Uninformed Usage

ChatGPT will answer almost any question you throw at it with a tone of authority.

Whether the response is actually correct is a different story. For most popular topics, the responses are overwhelmingly accurate. That's because the large and diverse training data minimize the noise of individual outliers.

This excerpt from the Problems section on the "Wisdom of the crowd" Wikipedia page is instructive:

"Averaging can eliminate random errors that affect each person's answer in a different way, but not systematic errors that affect the opinions of the entire crowd in the same way. So for instance, a wisdom-of-the-crowd technique would not be expected to compensate for cognitive biases."

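A short simulation illustrates the distinction in that quote. It's a toy sketch under made-up assumptions: each hypothetical crowd member guesses a true value of 100 with independent random noise, and in the second run everyone also shares the same +15 bias. The `crowd_estimate` helper and all the numbers are invented for illustration.

```python
import random
import statistics


def crowd_estimate(true_value: float, crowd_size: int, noise_sd: float,
                   bias: float, seed: int = 0) -> float:
    """Average `crowd_size` guesses, each = true_value + bias + random noise.

    `noise_sd` models random errors that differ for every person;
    `bias` models a systematic error shared by the entire crowd.
    """
    rng = random.Random(seed)  # seeded so the run is repeatable
    guesses = [true_value + bias + rng.gauss(0, noise_sd) for _ in range(crowd_size)]
    return statistics.fmean(guesses)


TRUE_VALUE = 100.0
# Random errors only: they largely cancel out when averaged.
unbiased = crowd_estimate(TRUE_VALUE, crowd_size=10_000, noise_sd=20.0, bias=0.0)
# Same noise plus a shared +15 bias: averaging cannot remove it.
biased = crowd_estimate(TRUE_VALUE, crowd_size=10_000, noise_sd=20.0, bias=15.0)
print(f"random errors only:   {unbiased:.1f}")
print(f"with systematic bias: {biased:.1f}")
```

When the errors are purely random, the average of 10,000 guesses lands close to 100. The shared bias, however, survives averaging intact and leaves the crowd's answer near 115.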
Counterintuitively, the overwhelming accuracy of ChatGPT's responses contributes to the danger of relying on its output. I call this the Software Reliability Paradox:

As software reliability increases, so too does the degree of harm it has the potential to create.

Over time, users become conditioned to accept reliable software's results as infallible. Thus, when the software produces an invalid result, it can easily go unnoticed.

Interestingly, unreliable software is immune to this phenomenon, as users become conditioned to distrust any of its outputs.

Thus, as AI models continue to improve in accuracy, their occasional inaccuracies will cause greater and greater harm.

The New Role of Trusted Authorities

This latest iteration of ChatGPT will make it increasingly easy for content creators to generate social media posts, blog articles, and code samples.

This AI-generated content will carry a tone of authority, even if its conclusions are nonsense. The good content creators will transition into a role of part-time copy editor, letting the AI take over the tedious work of creating large blocks of content.

The ability to read AI-generated content, recognize its mistakes, and correct them is what will separate the amateur content creators from the pros.

As a reader, you'll have to learn to tell the difference. Best of luck with that.

It's a brave new world!

Cover image created with Microsoft Designer