Signal vs. Noise

My approach to software development in four words: Less noise. More signal.

Readers of a certain age will remember over-the-air analog TV signals. If your antenna picked up a strong signal with no noise, you got a clear picture on your TV screen. On the other hand, if you had a weak signal with a lot of noise, you just saw "snow."

This concept of signal vs. noise is a useful metaphor. We can apply the concept to several areas of software development: code comments, version control revisions, big data, etc.

A useful definition

If we're going to use this metaphor and apply it in a meaningful way, we need to clarify exactly what these two terms mean in the context of the metaphor:

Signal: information that conveys meaning

Noise: items of no value that obscure useful information

Let's break this down further with a few examples.

Code comments

- Signal: an explanation of the intent behind an unusual block of code; the why

- Noise: a description of what a simple block of code is doing; the what
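
As a small illustration of the difference, here is a hypothetical Python function with one comment of each kind. The function name, the retry scenario, and the 30-second cap are all invented for this sketch; only the contrast between the two comments matters.

```python
def next_retry_delay(attempt: int) -> float:
    """Return the seconds to wait before retry number `attempt` (hypothetical example)."""
    # Noise: multiply 0.5 by 2 raised to the power of attempt.
    # (This only restates what the next line already says.)
    delay = 0.5 * 2 ** attempt

    # Signal: cap the delay so a long outage never leaves a user waiting
    # more than 30 seconds between attempts. (Assumed requirement, made up
    # for this sketch.)
    return min(delay, 30.0)
```

Delete the first comment and nothing is lost. Delete the second and the next maintainer has to guess why the cap exists.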

Version control

- Signal: changes to the code that alter the behavior of the program

- Noise: changes that don't alter behavior, like variable casing in VBA
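
One rough way to spot this kind of noise is to compare two versions of a file while ignoring letter case. The sketch below is written in Python and the helper name `is_case_only_change` is my own invention, not part of any version control tool. It is also a simplification: a casing change inside a string literal can alter behavior, and this check would still call it noise.

```python
def is_case_only_change(old: str, new: str) -> bool:
    """Return True when two versions of a source file differ only in letter casing.

    Simplifying assumption: every character is treated as case-insensitive,
    which suits identifiers in a case-insensitive language like VBA but is
    wrong for the contents of string literals.
    """
    return old != new and old.casefold() == new.casefold()


# Hypothetical before/after snippets for illustration.
print(is_case_only_change("Dim MyVar As Long", "Dim myVar As Long"))
print(is_case_only_change("MyVar = 1", "MyVar = 2"))
```

The first call reports a case-only change (noise); the second reports a real change (signal).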

The signal-noise relationship

Signal is not simply the inverse of noise. The two variables are independent.

This is important because we often assume that the presence of signal implies an absence of noise, and vice versa.

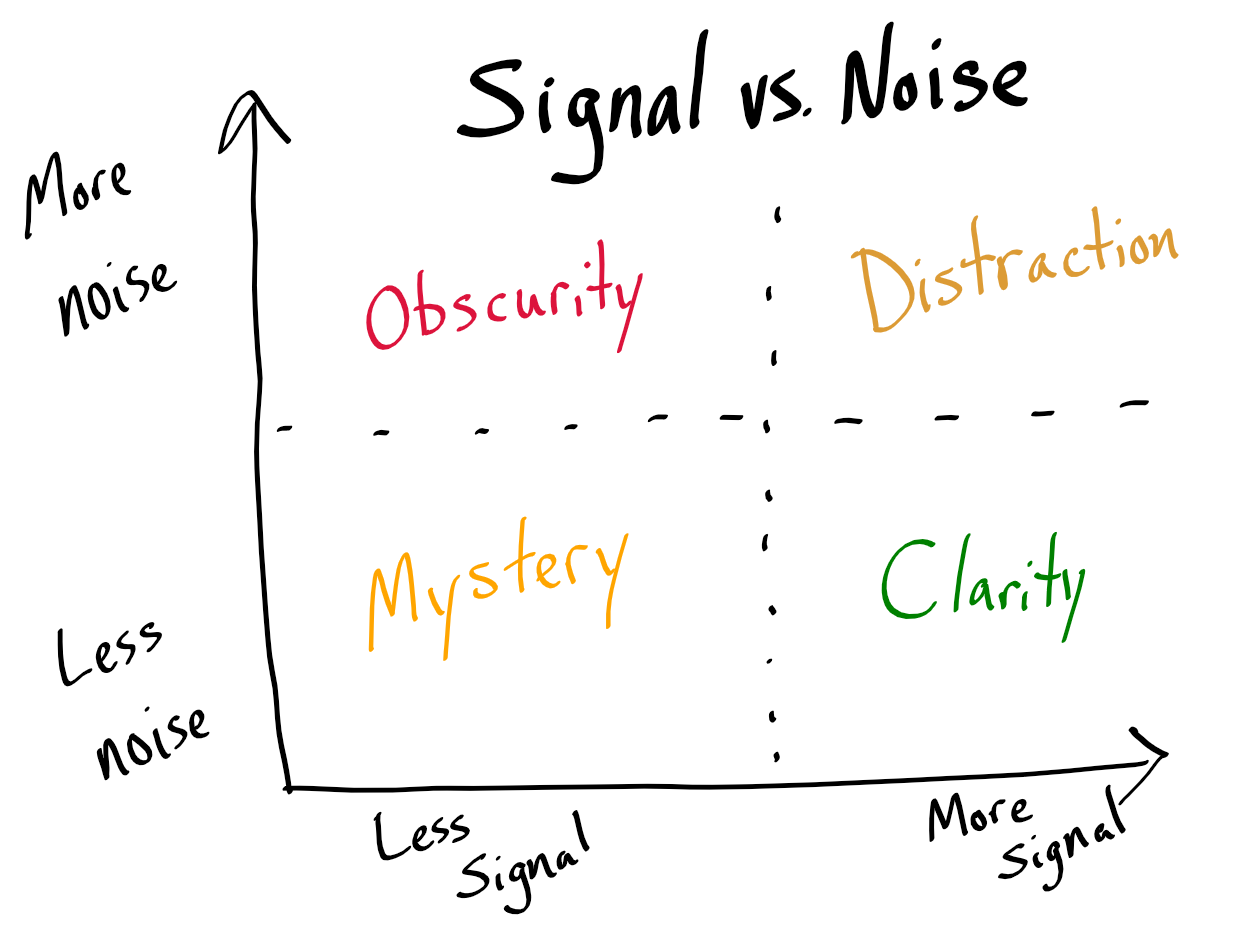

Refer to the chart at the top of this post. To aid in our discussion, I've labeled the four quadrants.

Mystery

In the bottom left, we have a situation with no noise or signal. There's nothing to distract us from the information that isn't there.

Obscurity

In the top left, we have the worst of both worlds. Lots of noise. Very little signal. Verbose logging is a good example of this phenomenon. If you've ever looked at a raw web server log, unfiltered ProcMon results, or a Wireshark capture, then you know what I'm talking about.

It's important to note that "very little signal" is worlds apart from "no signal." The three examples I just gave have immeasurable value when troubleshooting. Digging through those resources for answers to sticky problems is long, difficult work, but the payoff is highly valuable. It's just like diamond mining, but without the child soldiers.
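
Most of that digging amounts to filtering. As a minimal sketch, assuming log lines loosely modeled on the Common Log Format, the Python below keeps only the requests that ended in a 5xx server error. The function name `server_errors` and the sample lines are made up for illustration; a real log may need a different pattern.

```python
import re
from typing import Iterable, Iterator

# Matches the three-digit status code that follows the quoted request,
# e.g. ... "GET /index.html HTTP/1.1" 500 1234
STATUS_AFTER_REQUEST = re.compile(r'" (\d{3}) ')


def server_errors(lines: Iterable[str]) -> Iterator[str]:
    """Yield only the lines whose HTTP status code is in the 5xx range."""
    for line in lines:
        match = STATUS_AFTER_REQUEST.search(line)
        if match and match.group(1).startswith("5"):
            yield line


# Made-up sample data, not taken from a real server.
SAMPLE_LOG = [
    '192.0.2.10 - - [01/Jan/2024:10:00:00 +0000] "GET /index.html HTTP/1.1" 200 5120',
    '192.0.2.11 - - [01/Jan/2024:10:00:01 +0000] "GET /favicon.ico HTTP/1.1" 404 209',
    '192.0.2.12 - - [01/Jan/2024:10:00:02 +0000] "POST /orders HTTP/1.1" 500 312',
]

for line in server_errors(SAMPLE_LOG):
    print(line)
```

Only the `POST /orders` line survives the filter. The other lines are still in the log if a later question needs them.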

Distraction

In the top right, we have lots of signal and lots of noise. The easiest way to end up in this situation is to adopt the pack rat mentality: "never delete anything." Mix one part sunk-cost fallacy with two parts cheap data storage and you have one intoxicating cocktail. If the road to hell is paved with good intentions, it runs right through this quadrant.

Clarity

In the bottom right, we have the ideal toward which we should constantly strive. The goal of pure clarity is deceptively difficult to achieve. It requires working against several of our human instincts so that we can strip away items of marginal value and keep only the most meaningful bits.

A long list of behavioral obstacles works against our achieving this outcome:

- endowment effect

- loss aversion

- sunk cost fallacy

- status quo bias

- regret aversion

- IKEA effect

- decision fatigue
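
Stepping back to the four quadrants as a whole: because signal and noise are independent, placing something on the chart takes two separate measurements, not one. Here is a minimal Python sketch of that idea. The 0-to-1 scale and the 0.5 cutoff are assumptions made for this illustration; the chart itself has no numeric axes.

```python
def quadrant(signal: float, noise: float, threshold: float = 0.5) -> str:
    """Name the quadrant for a given amount of signal and noise.

    Both inputs are assumed to be on a 0-to-1 scale, and `threshold` is an
    arbitrary cutoff between "low" and "high" chosen for this sketch.
    """
    high_signal = signal >= threshold
    high_noise = noise >= threshold
    if high_signal:
        return "Distraction" if high_noise else "Clarity"
    return "Obscurity" if high_noise else "Mystery"


print(quadrant(signal=0.2, noise=0.9))  # a raw, unfiltered log
print(quadrant(signal=0.9, noise=0.1))  # the goal
```

Reducing noise changes only the second argument. It moves Obscurity to Mystery, not to Clarity, unless the signal is raised as well.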

Why this matters more than ever

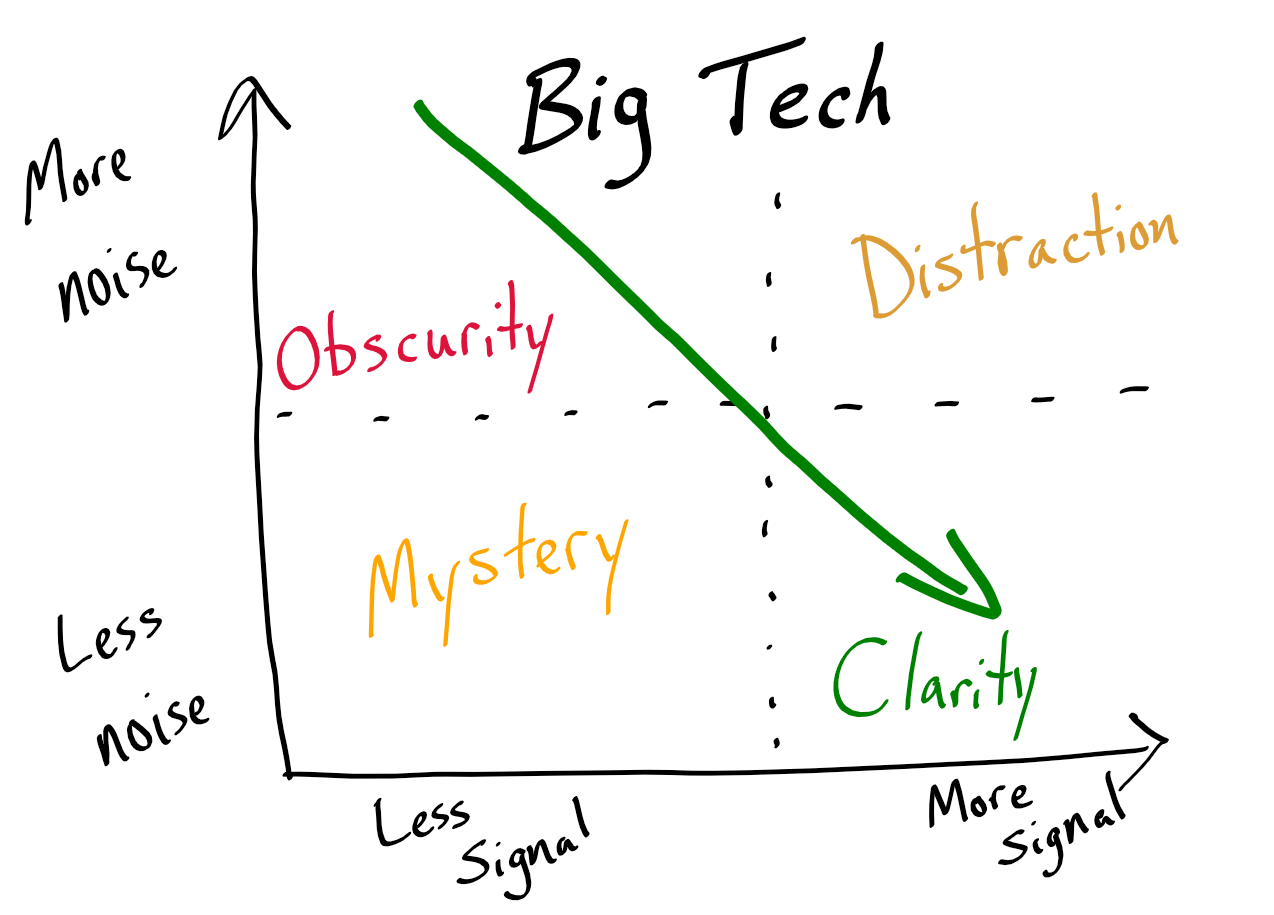

The ever-decreasing cost of data storage is leading to an explosion of data noise. Big tech companies have the resources to apply advanced machine learning techniques that move massive data pools from Obscurity to Clarity.

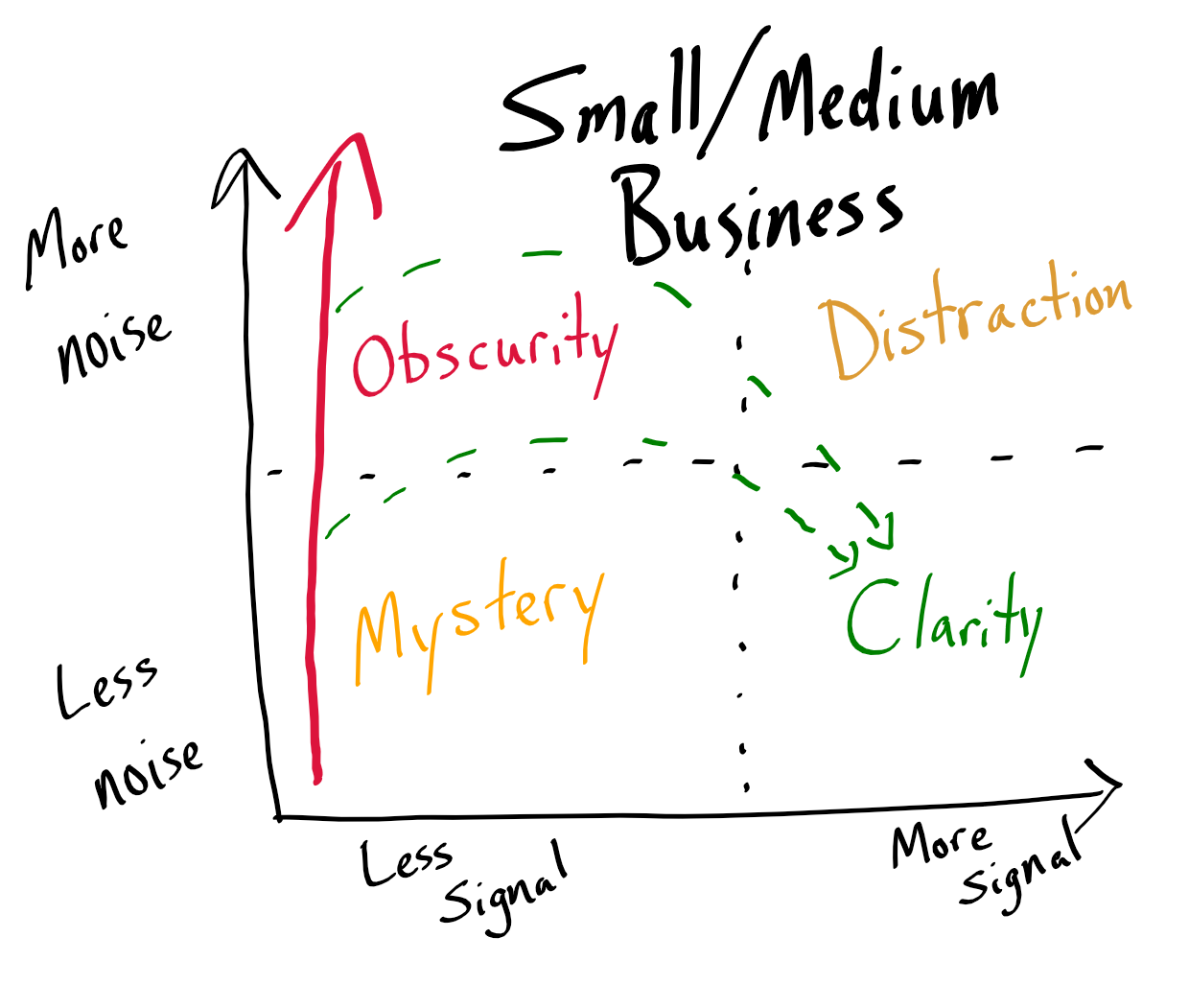

Small and medium businesses, on the other hand, have access to the same cheap storage but not the same resources to effectively mine their data for Signal. So what happens? Companies hoard data so that "some day" they will be able to mine it for useful information. Their optimism bias results in a data strategy that looks like the chart below. Pro tip: don't put faith in dotted lines on charts.

Other applications

As I said at the top, the Signal vs. Noise metaphor is incredibly useful. When you start thinking in these terms, you will see evidence of it everywhere. I plan to write an entire series of articles on applying the metaphor. You can follow along using the Signal vs. Noise RSS feed.

Image attribution

- Photo of TV snow by Fran Jacquier on Unsplash

- Photo of TV with rabbit ears by Bruna Araujo on Unsplash

- Hand-drawn charts by Mike Wolfe on NoLongerSet :-)