Reduce Logic Errors in Critical Code

Software Developers Can Almost Eliminate Logic Errors With This Powerful Technique

Logic errors are the most difficult and expensive types of bugs to fix.

Your most complex business logic is often the code that is most important to get right. That's because it probably involves calculations that your end users won't be able to recognize as incorrect.

Users have a tendency to treat such code as infallible and won't question it. This excessive deference means that logic errors will go unnoticed much longer than in other areas of the code.

This is the Software Reliability Paradox.

Why Do Logic Errors Go Unnoticed?

Logic errors go unnoticed because they do not manifest themselves in a tangible way to your users.

The only way a user would notice a logic error is if they knew what the output should be and realized that the program output was different. For many users, though, they assume that the output should be whatever the program generates. In their minds, then, no matter what the program generates, it must be correct!

This thought process reminds me of a scene from Austin Powers:

[Austin Powers]: Hey, there you are!

[Random guy]: Hi, do I know you?

[Austin Powers]: No, but that's where you are. You're there!

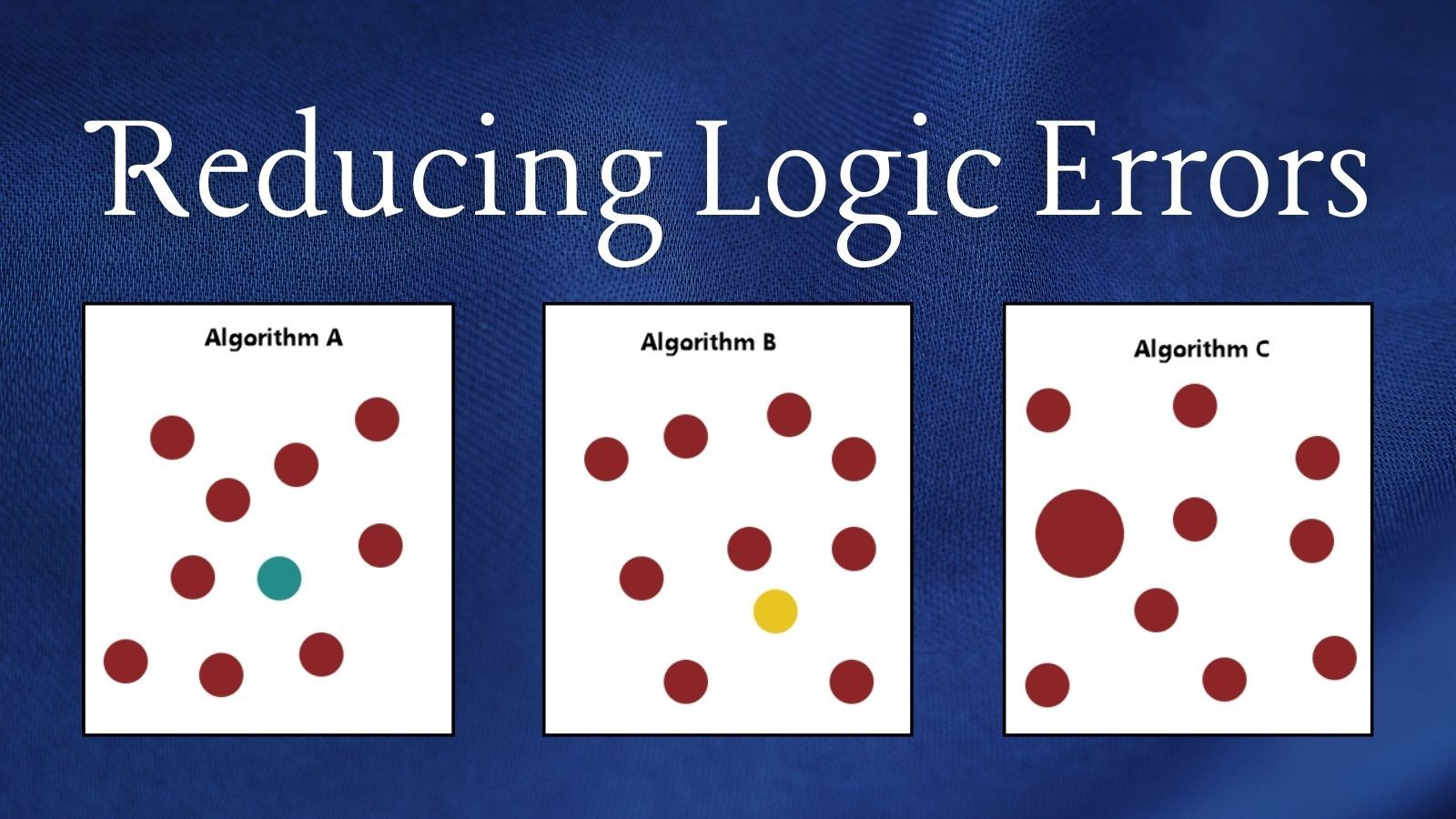

A Visual Example

Whether a user will catch a logic error, then, depends entirely on what it is they are expecting your code to produce.

Consider Algorithm A below. When it works correctly, the algorithm will produce a maroon circle. However, the algorithm contains a logic error. Ten percent of the time, it produces a different colored circle.

If the user is expecting to see a maroon circle, they will immediately notice if the circle is teal. But, if the user is expecting only to see a circle of a specific size, then the logic error will go unnoticed.

This plays out in business applications all the time. Instead of differently colored circles, though, the outputs are usually numbers. And while users may notice an error that's off by an order of magnitude (say, a number in the hundreds vs. one in the millions), they will rarely identify a number that's only off by a small percentage.

That small percentage error might still do lots of damage, though.

Forcing a Double Check

The best way to prevent (or at least identify) logic errors is to build automated double checks into the application.

Let's say the most critical part of our code is this function that generates a circle of a certain size and color. If we use Algorithm A, our code will fail ten percent of the time.

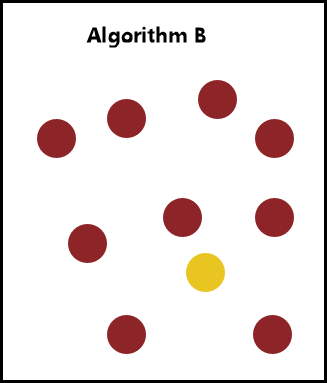

Consider Algorithm B below. Like Algorithm A, it has a ten percent failure rate. The chances of both Algorithm A and B failing at the same time is one percent (0.1 x 0.1 = 0.01).

But even in that much rarer scenario, the result of Algorithm A still won't match the result of Algorithm B. The two algorithms failed with different colored circles. So, the chances of both algorithms failing with the same incorrectly colored circle are some number well below one percent.

But we can do even better.

Improving the Double Check

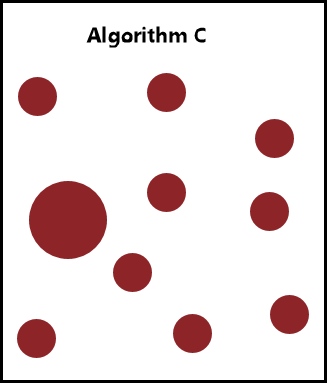

The more different the algorithms we use to arrive at the same outputs, the less likely the algorithms are to fail in the same way.

Algorithm C also has a ten percent failure rate. Unlike Algorithms A and B, though, it has a different kind of failure. The failed circle is the correct color, but it's the wrong size.

If Algorithm A always produces a circle of the correct size, but occasionally the wrong color; and Algorithm C always produces a circle of the correct color, but occasionally the wrong size; then this code will never allow an uncaught logic error.

And that, boys and girls, is how you reduce logic errors in critical code.

Related articles